- Windows Azure Storage Explorer Download

- Azure Data Explorer Storage Account

- Azure Data Explorer Download

- Azure Data Explorer Blob Storage

- Querying the data from 3 different blobs in order to see the data that was stored. Once the data is in storage, it is still possible to query the day but in a smaller capacity. Querying the data now uses an operator called ‘externaldata’ which requires you to use a SAS token URL generated by the Blob in order to pull the data from it.

- Ingest data into the Azure Data Explorer The.ingest into table command can read the data from an Azure Blob or Azure Data Lake Storage and import the data into the cluster. This means it is ingesting the data and stores it locally for a better performance. Authentication is done with Azure SaS Tokens.

Azure Data Lake Storage is a highly scalable and cost-effective data lake solution for big data analytics. It combines the power of a high-performance file system with massive scale and economy to help you reduce your time to insight. Data Lake Storage Gen2 extends Azure Blob Storage capabilities and is optimized for analytics workloads.

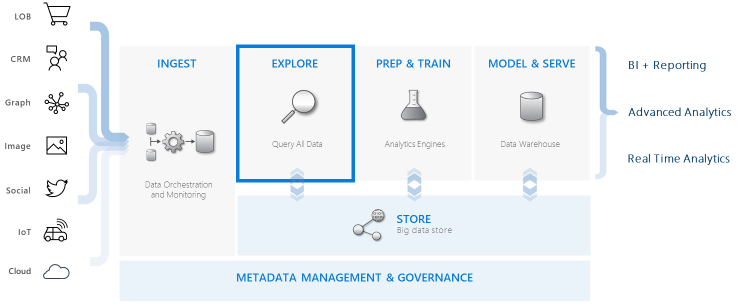

Send data directly to Azure Sentinel and ADX in parallel. You may want to retain any data with security value in Azure Sentinel to use in detections, incident investigations, threat hunting, UEBA, and so on. Keeping this data in Azure Sentinel mainly benefits Security Operations Center (SOC) users, where typically, 3-12 months of storage are enough. Azure Data Explorer integrates with other major services to provide an end-to-end solution that includes data collection, ingestion, storage, indexing, querying, and visualization. It has a pivotal role in the data warehousing flow by executing the EXPLORE step of the flow on terabytes of diverse raw data.

Azure Data Explorer integrates with Azure Blob Storage and Azure Data Lake Storage (Gen1 and Gen2), providing fast, cached, and indexed access to data stored in external storage. You can analyze and query data without prior ingestion into Azure Data Explorer. You can also query across ingested and uningested external data simultaneously.

Tip

The best query performance necessitates data ingestion into Azure Data Explorer. The capability to query external data without prior ingestion should only be used for historical data or data that are rarely queried. Optimize your external data query performance for best results.

Create an external table

Let's say you have lots of CSV files containing historical info on products stored in a warehouse, and you want to do a quick analysis to find the five most popular products from last year. In this example, the CSV files look like:

| Timestamp | ProductId | ProductDescription |

|---|---|---|

| 2019-01-01 11:21:00 | TO6050 | 3.5in DS/HD Floppy Disk |

| 2019-01-01 11:30:55 | YDX1 | Yamaha DX1 Synthesizer |

| ... | ... | ... |

The files are stored in Azure Blob storage mycompanystorage under a container named archivedproducts, partitioned by date:

To run a KQL query on these CSV files directly, use the .create external table command to define an external table in Azure Data Explorer. For more information on external table create command options, see external table commands.

The external table is now visible in the left pane of the Web UI:

External table permissions

- The database user can create an external table. The table creator automatically becomes the table administrator.

- The cluster, database, or table administrator can edit an existing table.

- Any database user or reader can query an external table.

Querying an external table

Once an external table is defined, the external_table() function can be used to refer to it. The rest of the query is standard Kusto Query Language.

Querying external and ingested data together

You can query both external tables and ingested data tables within the same query. You can join or union the external table with additional data from Azure Data Explorer, SQL servers, or other sources. Use a let( ) statement to assign a shorthand name to an external table reference.

In the example below, Products is an ingested data table and ArchivedProducts is an external table that we've defined previously:

Querying hierarchical data formats

Azure Data Explorer allows querying hierarchical formats, such as JSON, Parquet, Avro, and ORC. To map hierarchical data schema to an external table schema (if it's different), use external table mappings commands. For instance, if you want to query JSON log files with the following format:

The external table definition looks like this:

Define a JSON mapping that maps data fields to external table definition fields:

When you query the external table, the mapping will be invoked, and relevant data will be mapped to the external table columns:

For more info on mapping syntax, see data mappings.

Query TaxiRides external table in the help cluster

Use the test cluster called help to try out different Azure Data Explorer capabilities. The help cluster contains an external table definition for a New York City taxi dataset containing billions of taxi rides.

Create external table TaxiRides

This section shows the query used to create the TaxiRides external table in the help cluster. Since this table has already been created, you can skip this section and go directly to query TaxiRides external table data.

You can find the created TaxiRides table by looking at the left pane of the Web UI:

Query TaxiRides external table data

Sign in to https://dataexplorer.azure.com/clusters/help/databases/Samples.

Query TaxiRides external table without partitioning

Run this query on the external table TaxiRides to show rides for each day of the week, across the entire data set.

This query shows the busiest day of the week. Since the data isn't partitioned, the query may take up to several minutes to return results.

Query TaxiRides external table with partitioning

Run this query on the external table TaxiRides to show taxi cab types (yellow or green) used in January of 2017.

This query uses partitioning, which optimizes query time and performance. The query filters on a partitioned column (pickup_datetime) and returns results in a few seconds.

You can write additional queries to run on the external table TaxiRides and learn more about the data.

Optimize your query performance

Optimize your query performance in the lake by using the following best practices for querying external data.

Data format

- Use a columnar format for analytical queries, for the following reasons:

- Only the columns relevant to a query can be read.

- Column encoding techniques can reduce data size significantly.

- Azure Data Explorer supports Parquet and ORC columnar formats. Parquet format is suggested because of optimized implementation.

Azure region

Check that external data is in the same Azure region as your Azure Data Explorer cluster. This setup reduces cost and data fetch time.

File size

The optimal file size is hundreds of Mb (up to 1 GB) per file. Avoid many small files that require unneeded overhead, such as slower file enumeration process and limited use of columnar format. The number of files should be greater than the number of CPU cores in your Azure Data Explorer cluster.

Compression

Use compression to reduce the amount of data being fetched from the remote storage. For Parquet format, use the internal Parquet compression mechanism that compresses column groups separately, allowing you to read them separately. To validate use of compression mechanism, check that the files are named as follows: <filename>.gz.parquet or <filename>.snappy.parquet and not <filename>.parquet.gz.

Partitioning

Organize your data using 'folder' partitions that enable the query to skip irrelevant paths. When planning partitioning, consider file size and common filters in your queries such as timestamp or tenant ID.

VM size

Select VM SKUs with more cores and higher network throughput (memory is less important). For more information, see Select the correct VM SKU for your Azure Data Explorer cluster.

Next steps

- Query your data in the Azure Data Lake using Azure Data Explorer. Learn to write queries and derive additional insights from your data.

By default, logs ingested into Azure Sentinel are stored in Azure Monitor Log Analytics. This article explains how to reduce retention costs in Azure Sentinel by sending them to Azure Data Explorer (ADX) for long-term retention.

Storing logs in ADX reduces costs while retains your ability to query your data, and is especially useful as your data grows. For example, while security data may lose value over time, you may be required to retain logs for regulatory requirements or to run periodic investigations on older data.

About Azure Data Explorer

ADX is a big data analytics platform that is highly optimized for log and data analytics. Since ADX uses Kusto Query Language (KQL) as its query language, it's a good alternative for Azure Sentinel data storage. Using ADX for your data storage enables you to run cross-platform queries and visualize data across both ADX and Azure Sentinel.

For more information, see the ADX documentation and blog.

When to integrate with ADX

Azure Sentinel provides full SIEM and SOAR capabilities, quick deployment and configuration, as well as advanced, built-in security features for SOC teams. However, the value of storing security data in Azure Sentinel may drop after a few months, once SOC users don't need to access it as often as they access newer data.

If you only need to access specific tables occasionally, such as for periodic investigations or audits, you may consider that retaining your data in Azure Sentinel is no longer cost-effective. At this point, we recommend storing data in ADX, which costs less, but still enables you to explore using the same KQL queries that you run in Azure Sentinel.

You can access the data in ADX directly from Azure Sentinel using the Log Analytics ADX proxy feature. To do so, use cross cluster queries in your log search or workbooks.

Important

Core SIEM capabilities, including Analytic rules, UEBA, and the investigation graph, do not support data stored in ADX.

Note

Windows Azure Storage Explorer Download

Integrating with ADX can also enable you to have control and granularity in your data. For more information, see Design considerations.

Send data directly to Azure Sentinel and ADX in parallel

You may want to retain any data with security value in Azure Sentinel to use in detections, incident investigations, threat hunting, UEBA, and so on. Keeping this data in Azure Sentinel mainly benefits Security Operations Center (SOC) users, where typically, 3-12 months of storage are enough.

You can also configure all of your data, regardless of its security value, to be sent to ADX at the same time, where you can store it for longer. While sending data to both Azure Sentinel and ADX at the same time results in some duplication, the cost savings can be significant as you reduce the retention costs in Azure Sentinel.

Tip

This option also enables you to correlate data spread across data stores, such as to enrich the security data stored in Azure Sentinel with operational or long-term data stored in ADX. For more information, see Cross-resource query Azure Data Explorer by using Azure Monitor.

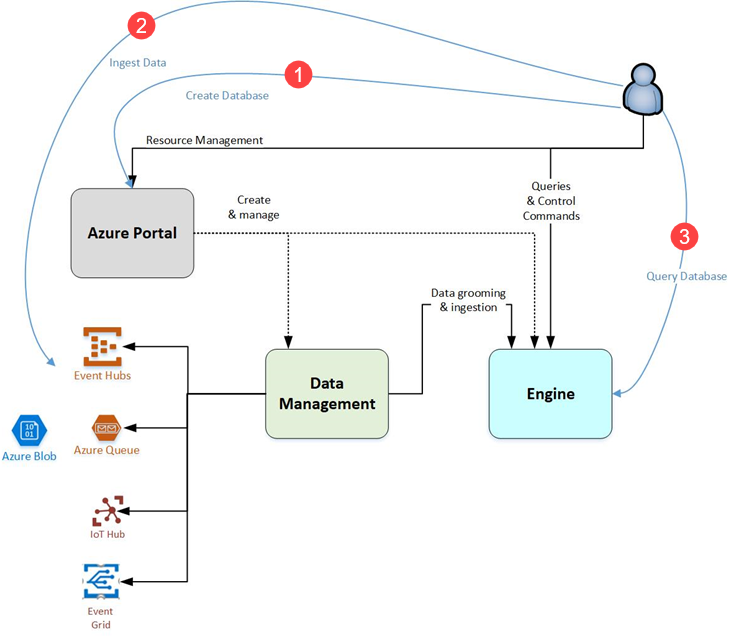

The following image shows how you can retain all of your data in ADX, while sending only your security data to Azure Sentinel for daily use.

For more information about implementing this architecture option, see Azure Data Explorer monitoring.

Export data from Log Analytics into ADX

Instead of sending your data directly to ADX, you can choose to export your data from Log Analytics into ADX via an Azure Event Hub or Azure Data Factory.

Data export architecture

Azure Data Explorer Storage Account

The following image shows a sample flow of exported data through the Azure Monitor ingestion pipeline. Your data is directed to Log Analytics by default, but you can also configure it to export to an Azure Storage Account or Event Hub.

When configuring the data export rules, select the types of logs you want to export. Once configured, new data arriving at the Log Analytics ingestion endpoint, and targeted to your workspace for the selected tables, is exported to your Storage Account or Event hub.

When configuring data for export, note the following considerations:

| Consideration | Details |

|---|---|

| Scope of data exported | Once export is configured for a specific table, all data sent to that table is exported, with no exception. Exported a filtered subset of your data, or limiting the export to specific events, is not supported. |

| Location requirements | Both the Azure Monitor / Azure Sentinel workspace, and the destination location (an Azure Storage Account or Event Hub) must be located in the same geographical region. |

| Supported tables | Not all tables are supported for export, such as custom log tables, which are not supported. For more information, see Log Analytics workspace data export in Azure Monitor and the list of supported tables. |

Data export methods and procedures

Use one of the following procedures to export data from Azure Sentinel into ADX:

Via an Azure Event Hub. Export data from Log Analytics into an Event Hub, where you can ingest it into ADX. This method stores some data (the first X months) in both Azure Sentinel and ADX.

Via Azure Storage and Azure Data Factory. Export your data from Log Analytics into Azure Blob Storage, then Azure Data Factory is used to run a periodic copy job to further export the data into ADX. This method enables you to copy data from Azure Data Factory only when it nears its retention limit in Azure Sentinel / Log Analytics, avoiding duplication.

This section describes how to export Azure Sentinel data from Log Analytics into an Event Hub, where you can ingest it into ADX. Similar to sending data directly to Azure Sentinel and ADX in parallel, this method includes some data duplication as the data is streamed into ADX as it arrives in Log Analytics.

The following image shows a sample flow of exported data into an Event Hub, from where it's ingested into ADX.

The architecture shown in the previous image provides the full Azure Sentinel SIEM experience, including incident management, visual investigations, threat hunting, advanced visualizations, UEBA, and more, for data that must be accessed frequently, every X months. At the same time, this architecture also enables you to query long-term data by accessing it directly in ADX, or via Azure Sentinel thanks to the ADX proxy feature. Queries to long-term data storage in ADX can be ported without any changes from Azure Sentinel to ADX.

Note

When exporting multiple data tables into ADX via Event Hub, keep in mind that Log Analytics data export has limitations for the maximum number of Event Hubs per namespace. For more information about data export Log Analytics workspace data export in Azure Monitor.

For most customers, we recommend using the Event Hub Standard tier. Depending on the amount of tables you need to export and the amount of traffic to those tables, you may need to use Event Hub Dedicated tier. For more information, see Event Hub documentation.

Tip

For more information about this procedure, see Tutorial: Ingest and query monitoring data in Azure Data Explorer.

To export data into ADX via an Event Hub:

Configure the Log Analytics data export to an Event Hub. For more information, see Log Analytics workspace data export in Azure Monitor.

Create an ADX cluster and database. For more information, see:

Create target tables. The raw data is first ingested to an intermediate table, where the raw data is stored, manipulated, and expanded.

An update policy, which is similar to a function applied to all new data, is used to ingest the expanded data into the final table, which has the same schema as the original table in Azure Sentinel.

Set the retention on the raw table to 0 days. The data is stored only in the properly formatted table, and deleted in the raw table as soon as it's transformed.

For more information, see Ingest and query monitoring data in Azure Data Explorer.

Create table mapping. Map the JSON tables to define how records land in the raw events table as they come in from an Event Hub. For more information, see Create the update policy for metric and log data.

Create an update policy and attach it to the raw records table. In this step, create a function, called an update policy, and attach it to the destination table so that the data is transformed at ingestion time.

Note

This step is required only when you want to have data tables in ADX with the same schema and format as in Azure Sentinel.

For more information, see Connect an Event Hub to Azure Data Explorer.

Create a data connection between the Event Hub and the raw data table in ADX. Configure ADX with details of how to export the data into the Event Hub.

Use the instructions in the Azure Data Explorer documentation and specify the following details:

- Target. Specify the specific table with the raw data.

- Format. Specify

.jsonas the table format. - Mapping to be applied. Specify the mapping table created in step 4 above.

Modify retention for the target table. The default Azure Data Explorer retention policy may be far longer than you need.

Use the following command to update the retention policy to one year:

This section describes how to export Azure Sentinel data from Log Analytics into Azure Storage, where Azure Data Factory can run a regular job to export the data into ADX.

Using Azure Storage and Azure Data Factory enables you to copy data from Azure Storage only when it's close to the retention limit in Azure Sentinel / Log Analytics. There is no data duplication, and ADX is used only to access data that's older than the retention limit in Azure Sentinel.

Tip

While the architecture for using Azure Storage and Azure Data Factory for your legacy data is more complex, this method can offer larger cost savings overall.

The following image shows a sample flow of exported data into an Azure Storage, from where Azure Data Factory runs a regular job to further export it into ADX.

To export data into ADX via an Azure Storage and Azure Data Factory:

Configure the Log Analytics data export to an Event Hub. For more information, see Log Analytics workspace data export in Azure Monitor.

Create an ADX cluster and database. For more information, see:

Create target tables. The raw data is first ingested to an intermediate table, where the raw data is stored, manipulated, and expanded.

An update policy, which is similar to a function applied to all new data, is used to ingest the expanded data into the final table, which has the same schema as the original table in Azure Sentinel.

Set the retention on the raw table to 0 days. The data is stored only in the properly formatted table, and deleted in the raw table as soon as it's transformed.

For more information, see Ingest and query monitoring data in Azure Data Explorer.

Create table mapping. Map the JSON tables to define how records land in the raw events table as they come in from an Event Hub. For more information, see Create the update policy for metric and log data.

Create an update policy and attach it to the raw records table. In this step, create a function, called an update policy, and attach it to the destination table so that the data is transformed at ingestion time.

Note

This step is required only when you want to have data tables in ADX with the same schema and format as in Azure Sentinel.

For more information, see Connect an Event Hub to Azure Data Explorer.

Create a data connection between the Event Hub and the raw data table in ADX. Configure ADX with details of how to export the data into the Event Hub.

Use the instructions in the Azure Data Explorer documentation and specify the following details:

- Target. Specify the specific table with the raw data.

- Format. Specify

.jsonas the table format. - Mapping to be applied. Specify the mapping table created in step 4 above.

Set up the Azure Data Factory pipeline:

Create linked services for Azure Storage and Azure Data Explorer. For more information, see:

- Copy data to or from Azure Data Explorer by using Azure Data Factory.

Create a dataset from Azure Storage. For more information, see Datasets in Azure Data Factory.

Create a data pipeline with a copy operation, based on the LastModifiedDate properties.

For more information, see Copy new and changed files by LastModifiedDate with Azure Data Factory.

Design considerations

Azure Data Explorer Download

When storing your Azure Sentinel data in ADX, consider the following elements:

| Consideration | Description |

|---|---|

| Cluster size and SKU | Plan carefully for the number of nodes and the VM SKU in your cluster. These factors will determine the amount of processing power and the size of your hot cache (SSD and memory). The bigger the cache, the more data you will be able to query at a higher performance. We encourage you to visit the ADX sizing calculator, where you can play with different configurations and see the resulting cost. ADX also has an autoscale capability that makes intelligent decisions to add/remove nodes as needed based on cluster load. For more information, see Manage cluster horizontal scaling (scale out) in Azure Data Explorer to accommodate changing demand. |

| Hot/cold cache | ADX provides control over the data tables that are in hot cache, and return results faster. If you have large amounts of data in your ADX cluster, you may want to break down tables by month, so that you have greater granularity on the data that's present in your hot cache. For more information, see Cache policy (hot and cold cache) |

| Retention | In ADX, you can configure when data is removed from a database or an individual table, which is also an important part of limiting storage costs. For more information, see Retention policy. |

| Security | Several ADX settings can help you protect your data, such as identity management, encryption, and so on. Specifically for role-based access control (RBAC), ADX can be configured to restrict access to databases, tables, or even rows within a table. For more information, see Security in Azure Data Explorer and Row level security. |

| Data sharing | ADX allows you to make pieces of data available to other parties, such as partners or vendors, and even buy data from other parties. For more information, see Use Azure Data Share to share data with Azure Data Explorer. |

| Other cost components | Consider the other cost components for the following methods: Exporting data via an Azure Event Hub: - Log Analytics data export costs, charged per exported GBs. - Event hub costs, charged by throughput unit. Export data via Azure Storage and Azure Data Factory: - Log Analytics data export, charged per exported GBs. - Azure Storage, charged by GBs stored. - Azure Data Factory, charged per copy of activities run. |

Next steps

Regardless of where you store your data, continue hunting and investigating using Azure Sentinel.

Azure Data Explorer Blob Storage

For more information, see: